tolerations: Tolerations work together with taints. A taint marks a node so that it repels pods, and a toleration on a pod allows (but does not force) that pod to be scheduled onto a node with a matching taint. Together, the two concepts keep pods from running on inappropriate nodes.

Now let's go back to the user interface of Airflow and click on the DAG example_kubernetes_executor.py. From there, schedule the DAG by turning ON the toggle, then trigger it manually by clicking on "Trigger DAG" as shown below.

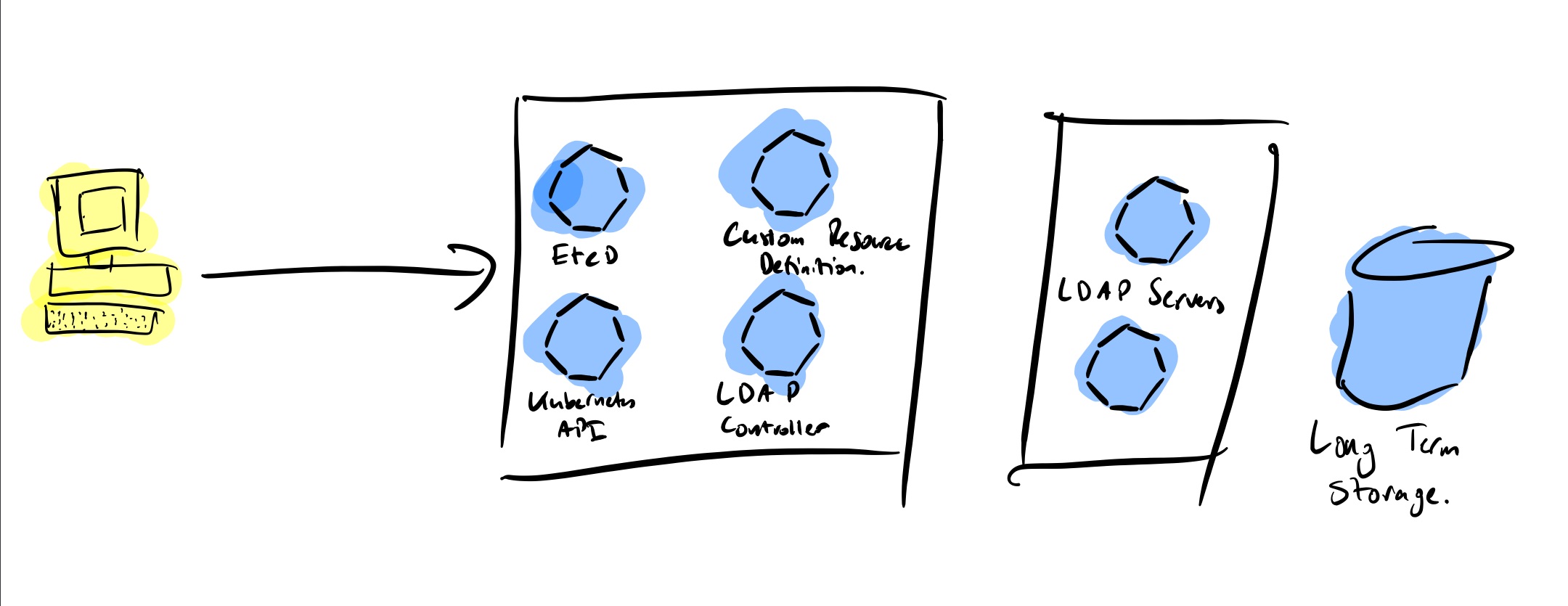

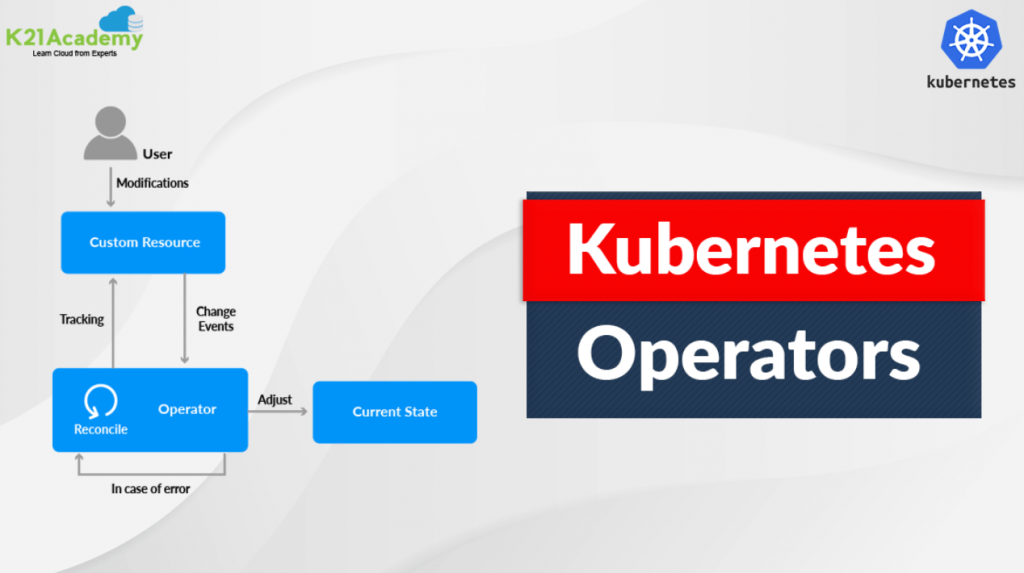
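To make the taint/toleration relationship described under tolerations more concrete, here is a minimal pure-Python model of how the Kubernetes scheduler matches a pod's tolerations against a node's taints. It is a simplified sketch, not Kubernetes or Airflow code. The `Taint`/`Toleration` classes, the `tolerates()` and `can_schedule()` helpers, and the `dedicated=airflow` key/value are hypothetical names chosen for illustration. The soft `PreferNoSchedule` effect is deliberately ignored.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Taint:
    key: str
    value: str
    effect: str  # "NoSchedule", "PreferNoSchedule" or "NoExecute"


@dataclass
class Toleration:
    key: str = ""            # empty key + operator "Exists" matches every taint key
    operator: str = "Equal"  # "Equal" or "Exists"
    value: str = ""
    effect: str = ""         # empty effect matches every effect


def tolerates(tol: Toleration, taint: Taint) -> bool:
    """Return True if a single toleration matches a single taint (simplified)."""
    if tol.effect and tol.effect != taint.effect:
        return False
    if tol.key and tol.key != taint.key:
        return False
    if tol.operator == "Exists":
        return True
    return tol.value == taint.value  # operator "Equal"


# Only these effects block scheduling outright in this model.
HARD_EFFECTS = {"NoSchedule", "NoExecute"}


def can_schedule(tolerations: List[Toleration], node_taints: List[Taint]) -> bool:
    """A pod may land on a node only if every hard taint is tolerated by some toleration."""
    return all(
        any(tolerates(tol, taint) for tol in tolerations)
        for taint in node_taints
        if taint.effect in HARD_EFFECTS
    )


# Hypothetical node reserved for Airflow task pods.
airflow_node = [Taint(key="dedicated", value="airflow", effect="NoSchedule")]

# Hypothetical toleration, mirroring the key/operator/value fields of a Kubernetes toleration.
airflow_tolerations = [Toleration(key="dedicated", operator="Equal", value="airflow")]

print(can_schedule(airflow_tolerations, airflow_node))  # True
print(can_schedule([], airflow_node))                   # False
```

The design mirrors the rule in the text: the taint lives on the node, the toleration lives on the pod, and only a pod carrying a matching toleration gets past the node's repelling taint.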

Recommended for debugging and testing only.

- LocalExecutor, which runs multiple subprocesses to execute your tasks concurrently on the same host. It scales quite well (vertical scaling) and can be used in production.
- CeleryExecutor, which allows you to scale your Airflow cluster horizontally. You basically run your tasks on multiple nodes (Airflow workers), and each task is first queued through a message broker such as RabbitMQ. All the distribution is managed by Celery. It is the recommended way to go in production, since it lets you absorb the workload you need.
- DaskExecutor. Dask is another Python distributed task processing system, like Celery. It's up to you to choose either Dask or Celery, according to the framework that best fits your needs.
- KubernetesExecutor, which is quite new and allows you to run your tasks using Kubernetes, making your Airflow cluster elastic to your workload so that you don't waste your precious resources.

You may ask, what does this code actually do? Well, it's fairly easy. You have three tasks here (we will see the last one later), all using the PythonOperator to execute Python callables, either print_stuff or use_vim_binary. The interesting part here is actually the parameter executor_config. As you can see, we tell the operator that we are going to use the KubernetesExecutor with a special Docker image for tasks one and two. This means that when the PODs get created, they first pull their Docker image according to this parameter.

Why is that so nice? Because you can use an image containing only the dependencies required to execute one task, rather than every dependency needed by all the tasks of your DAG. This avoids conflicts and makes updates easier.

A very important point to keep in mind when you specify a Docker image, as we did in the previous tasks: that image must have Airflow installed, otherwise it won't work. Just as with the CeleryExecutor, where Airflow (and Celery) must be installed on every worker node, think of a worker node as being a POD in the context of the KubernetesExecutor.

Type Ctrl+D to close the shell session and exit the container.
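Back to the executor_config parameter: the sketch below shows, in plain Python with no Airflow import, the shape of the per-task values and how each task's POD ends up with either its own image or the default worker image. It assumes the Airflow 1.10 dictionary form (`{"KubernetesExecutor": {"image": ...}}`). Newer Airflow releases use a `pod_override` object instead. The task ids, image names, `DEFAULT_WORKER_IMAGE` and the `image_for()` helper are all hypothetical. The helper only models the behaviour described above and is not Airflow's own implementation.

```python
from typing import Any, Dict, Optional

# Hypothetical default worker image. In a real deployment this comes from the
# [kubernetes] section of airflow.cfg, not from this script.
DEFAULT_WORKER_IMAGE = "my-registry/airflow-worker:latest"

# Per-task executor_config values in the Airflow 1.10 dictionary style.
# Placeholder image names; every one of them must have Airflow installed.
EXECUTOR_CONFIGS: Dict[str, Optional[Dict[str, Any]]] = {
    "one_task": {"KubernetesExecutor": {"image": "my-registry/airflow-slim:latest"}},
    "two_task": {"KubernetesExecutor": {"image": "my-registry/airflow-vim:latest"}},
    # No image override here, only a (hypothetical) toleration, so the default image is used.
    "three_task": {"KubernetesExecutor": {"tolerations": [
        {"key": "dedicated", "operator": "Equal", "value": "airflow"},
    ]}},
}


def image_for(executor_config: Optional[Dict[str, Any]],
              default: str = DEFAULT_WORKER_IMAGE) -> str:
    """Return the image a task's POD would pull: the override if present, else the default."""
    k8s_settings = (executor_config or {}).get("KubernetesExecutor", {})
    return k8s_settings.get("image", default)


for task_id, config in EXECUTOR_CONFIGS.items():
    print(f"{task_id}: {image_for(config)}")
```

In an actual DAG file, each dictionary would be passed to the operator, e.g. `PythonOperator(task_id="one_task", python_callable=print_stuff, executor_config=EXECUTOR_CONFIGS["one_task"], dag=dag)`. Keeping one slim image per task is exactly what lets a task ship only its own dependencies.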
